nilGPT v5: Web Search & PDF Uploads

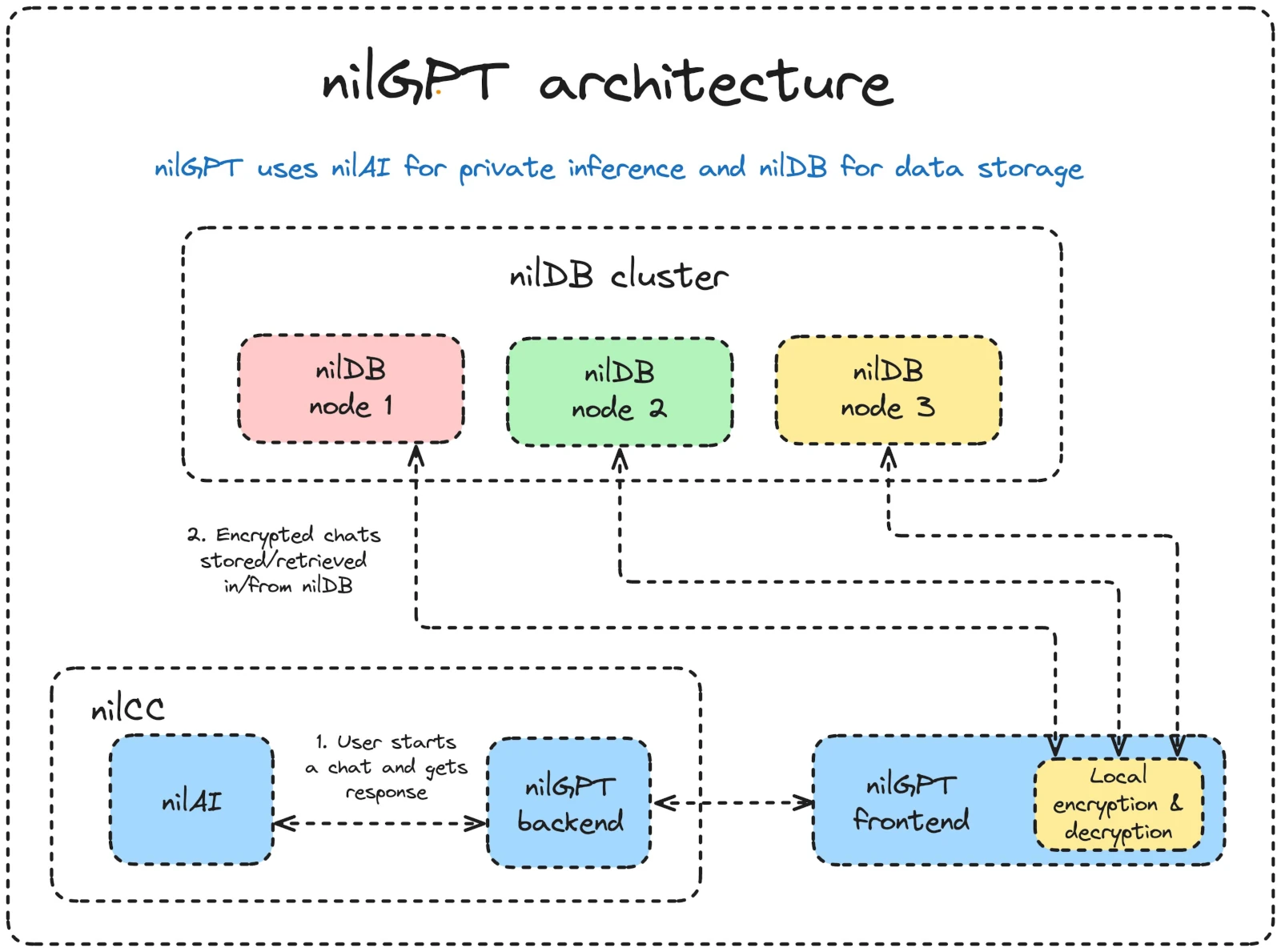

Another week, another release of nilGPT, and another step toward building the most capable private AI assistant on the market. We're keeping the pace high, shipping features that bring nilGPT closer to parity with state-of-the-art chatbots, while always staying true to our core value: privacy first.

This release focuses on two major new capabilities: web search and PDF uploads.

Major Features

Web Search

One of the most requested features has arrived. nilGPT now supports web search, made possible by a new capability recently introduced in nilAI. This capability is powered by Brave's API, chosen for its stronger privacy protections.

By default, web search is turned off in nilGPT, so you'll need to toggle it on to use it. When it is enabled, please be aware that sensitive query data may be visible to Brave's servers. While nilAI's optimisation pipeline summarises queries before sending them, we cannot guarantee that all sensitive information is stripped out. We are working on an "AI proxy" that removes or anonymises PII in queries, which would further improve the privacy of web search.
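To give a feel for what removing PII from a query can look like, here is a minimal sketch. It is not the planned "AI proxy" and not part of nilAI: the function name `redact_pii`, the two regular expressions, and the placeholder tokens are all assumptions made for illustration, and a real anonymiser would need to cover far more than email addresses and phone numbers.

```python
import re

# Hypothetical patterns for illustration only; they catch common email
# addresses and phone-like digit runs, and nothing else.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(query: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    query = EMAIL_RE.sub("[EMAIL]", query)
    query = PHONE_RE.sub("[PHONE]", query)
    return query


if __name__ == "__main__":
    print(redact_pii("email jane.doe@example.com about the lease"))
    print(redact_pii("call +44 20 7946 0958 today"))
```

Pattern matching like this misses names, addresses, and anything phrased unusually, which is one reason keeping web search off by default is the safer choice for sensitive queries.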

For now, web search is limited to 20 queries per user per day as we refine performance and gather your feedback. Even in this early stage, it extends nilGPT's reach beyond its training data, pulling in fresh answers and information directly from the web.
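For the curious, a per-user daily cap like this can be sketched in a few lines. This is an illustrative in-memory counter, not how nilGPT enforces the limit: the class `DailyQuota`, its method `try_consume`, and the choice to key counts by calendar date are assumptions. Only the figure of 20 comes from this post.

```python
from collections import defaultdict
from datetime import date
from typing import Optional

DAILY_LIMIT = 20  # per-user cap stated in this post


class DailyQuota:
    """In-memory counter of searches per user per calendar day (hypothetical)."""

    def __init__(self, limit: int = DAILY_LIMIT) -> None:
        self.limit = limit
        self._counts = defaultdict(int)  # (user_id, day) -> searches used

    def try_consume(self, user_id: str, today: Optional[date] = None) -> bool:
        """Record one search and return True, or return False if the cap is hit."""
        day = today or date.today()
        key = (user_id, day)
        if self._counts[key] >= self.limit:
            return False
        self._counts[key] += 1
        return True


if __name__ == "__main__":
    quota = DailyQuota()
    allowed = sum(quota.try_consume("alice") for _ in range(25))
    print(allowed)  # number of searches allowed out of 25 attempts
```

Because the day is part of the key, each user's count starts again from zero on the next calendar day without any explicit reset step.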

PDF Uploads

We've also added support for secure PDF uploads, allowing you to drop in documents and query them directly in nilGPT.

Initially, this feature was designed to accelerate workflows for professionals who handle large volumes of text, from legal contracts to academic research. But it's just as valuable for everyday use cases, like quickly checking an employment agreement for key clauses that deserve close attention. These are exactly the kinds of scenarios where truly private AI shows its worth, delivering both security and convenience.

As always, we have also fixed a number of bugs and introduced small quality-of-life improvements to keep the overall experience smooth.

Looking Ahead

We're excited about this release. It pushes nilGPT closer to the cutting edge while staying firmly grounded in our commitment to privacy. We're particularly interested to see how industry professionals adopt PDF analysis in their work, and we welcome community feedback as we continue to refine web search.

We're shipping fast, and our vision remains clear: to make nilGPT the go-to private AI chatbot.